The SEO industry has a new obsession: tracking AI prompts the way we used to track Google keywords.

AI Prompt Tracking: Why Your Data is Useless (And How to Fix It)

You know the old drill. Build a massive list. Categorize by topic. Calculate Share of Voice. Report monthly.

The problem is that this methodology produces metrics that look actionable but measure nothing useful. LLMs are generative, conversational models. They don’t rank pages in an index. Instead, they construct answers from training weights, retrieval signals, and conversational context. Tracking prompts the way you tracked keywords ignores all three of those mechanics.

The result is dashboards full of structural noise dressed up as competitive intelligence.

The Assure-Mention Effect

Consider a prompt like “Brand A vs Brand B for enterprise HR teams.” If you are tracking this prompt for Brand A, an LLM will mention you in close to 100% of responses. Your name appears in the prompt, so the model has to mention it to fulfill the conversational intent. Brand B gets the same treatment, which means the two of you split the mentions roughly evenly. That 50% Share of Voice (SOV) score on your tracking dashboard? It reflects syntax, not preference. The AI simply answered the question it was asked.

This is the Assure-Mention Effect. Any prompt containing your brand name will produce inflated mention rates. Blend those into your overall SOV and you create a number that looks strong in a quarterly review but tells your content team nothing about where to focus.
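As a rough illustration of how much the blend can hide, the sketch below splits a prompt set by whether the brand name already appears in the prompt and computes the mention rate for each group. The brand names (Acme, Globex, Initech), the `PromptResult` type, and the sample responses are all hypothetical, and plain substring matching is assumed to be good enough to detect a mention.

```python
from dataclasses import dataclass


@dataclass
class PromptResult:
    prompt: str
    response: str


def mention_rate(results, brand):
    """Fraction of responses that name the brand (case-insensitive substring match)."""
    if not results:
        return 0.0
    hits = sum(brand.lower() in r.response.lower() for r in results)
    return hits / len(results)


def split_by_prompted(results, brand):
    """Separate prompts that already contain the brand name from those that do not."""
    prompted = [r for r in results if brand.lower() in r.prompt.lower()]
    organic = [r for r in results if brand.lower() not in r.prompt.lower()]
    return prompted, organic


# Illustrative data only, not real model output.
results = [
    PromptResult("Acme vs Globex for enterprise HR teams",
                 "Acme suits large teams; Globex is cheaper."),
    PromptResult("Is Acme good for payroll?",
                 "Acme handles payroll well."),
    PromptResult("Best HR software for startups",
                 "Consider Globex or Initech."),
    PromptResult("How do I automate onboarding?",
                 "Tools like Acme and Initech can help."),
]

prompted, organic = split_by_prompted(results, "Acme")
blended_rate = mention_rate(results, "Acme")    # all prompts mixed together
prompted_rate = mention_rate(prompted, "Acme")  # brand name was in the prompt
organic_rate = mention_rate(organic, "Acme")    # brand name was not in the prompt

print(f"blended={blended_rate:.2f} prompted={prompted_rate:.2f} organic={organic_rate:.2f}")
```

In this toy data the blended rate sits between the two groups, which is exactly the number that looks strong in a review while hiding the weaker organic figure.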

An Intent-First Framework for AI Prompt Tracking

The fix is to stop sorting prompts by topic (“branded,” “use-case,” “comparison”) and start sorting them by what the user’s intent reveals about the value of an AI mention.

Discovery intent

A user asks “How do I choose cycling apparel for long-distance rides?” If an LLM names your brand here, it did so without prompting. The model associated you with the problem space based on its training data and retrieval index. This is organic AI mindshare, and it’s the hardest metric to move.

A 0% SOV at this level isn’t a failure. It’s a diagnostic: you lack awareness-stage presence in the model’s knowledge base.

Consideration intent

A user asks “Best project management apps for remote HR teams.” To answer this, the LLM will build a shortlist. Volume of mentions matters less than positioning. Are you the enterprise option or the budget alternative? Are you first in the list or an afterthought at the bottom?

At this tier, you track rank and framing, not raw mention count.
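One simple way to approximate rank and framing is to order brands by where they first appear in a response and to keep the text that immediately follows each name. The sketch below assumes order of first appearance is a fair proxy for list position, which will not hold for every response format. The function names, brands, and sample response are hypothetical.

```python
def brand_rank(response, brands):
    """Map each mentioned brand to a 1-based rank by first appearance.

    Brands that never appear in the response are omitted.
    """
    text = response.lower()
    found = []
    for brand in brands:
        pos = text.find(brand.lower())
        if pos >= 0:
            found.append((pos, brand))
    found.sort()
    return {brand: i + 1 for i, (_, brand) in enumerate(found)}


def framing_snippet(response, brand, width=40):
    """Return the brand name plus the next `width` characters, or None if absent."""
    idx = response.lower().find(brand.lower())
    if idx < 0:
        return None
    return response[idx: idx + len(brand) + width]


# Illustrative response only, not real model output.
response = (
    "1. Globex: the enterprise option. "
    "2. Acme: a budget alternative. "
    "3. Initech: best for startups."
)

ranks = brand_rank(response, ["Acme", "Globex", "Initech", "Hooli"])
snippet = framing_snippet(response, "Acme", width=25)
print(ranks)
print(snippet)
```

Reading the snippet next to the rank shows both where you were placed and how you were described.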

Conversion intent

A user asks “How much does Brand A’s enterprise plan cost?” Your SOV will be close to 100% by construction, because your name is in the prompt. The only metric that matters here is accuracy. Is the LLM returning current pricing? Correct feature descriptions? Valid integration partners? Inaccurate responses at this stage cost you closed deals.
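A basic accuracy check for pricing could pull dollar amounts out of a response and compare them with the price you currently publish. This is a minimal sketch: it assumes prices are written with a `$` sign, it ignores currency, billing period, and plan name, and the function names and sample sentences are hypothetical.

```python
import re

# Matches amounts such as $49, $49.99, or $1,200.
PRICE_PATTERN = re.compile(r"\$\s?(\d[\d,]*(?:\.\d+)?)")


def extract_prices(response):
    """Return every dollar amount found in the response as a float."""
    return [float(m.replace(",", "")) for m in PRICE_PATTERN.findall(response)]


def pricing_is_current(response, expected_price, tolerance=0.005):
    """True if the expected price is stated, False if only other prices are, None if no price is stated."""
    prices = extract_prices(response)
    if not prices:
        return None
    return any(abs(p - expected_price) <= tolerance for p in prices)


# Illustrative responses only, not real model output.
correct = pricing_is_current("Acme's enterprise plan costs $1,200 per month.", 1200.0)
stale = pricing_is_current("Acme's enterprise plan costs $999 per month.", 1200.0)
missing = pricing_is_current("Contact Acme sales for a quote.", 1200.0)
print(correct, stale, missing)
```

The three-way result separates a wrong answer from a non-answer, which usually call for different fixes.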

The Mention-to-Citation Leakage Gap

In traditional search, ranking drove traffic. In AI search, that link is broken. An LLM can write three paragraphs recommending your product and never link to your website. To measure commercial value from AI visibility, you need to separate two distinct outputs:

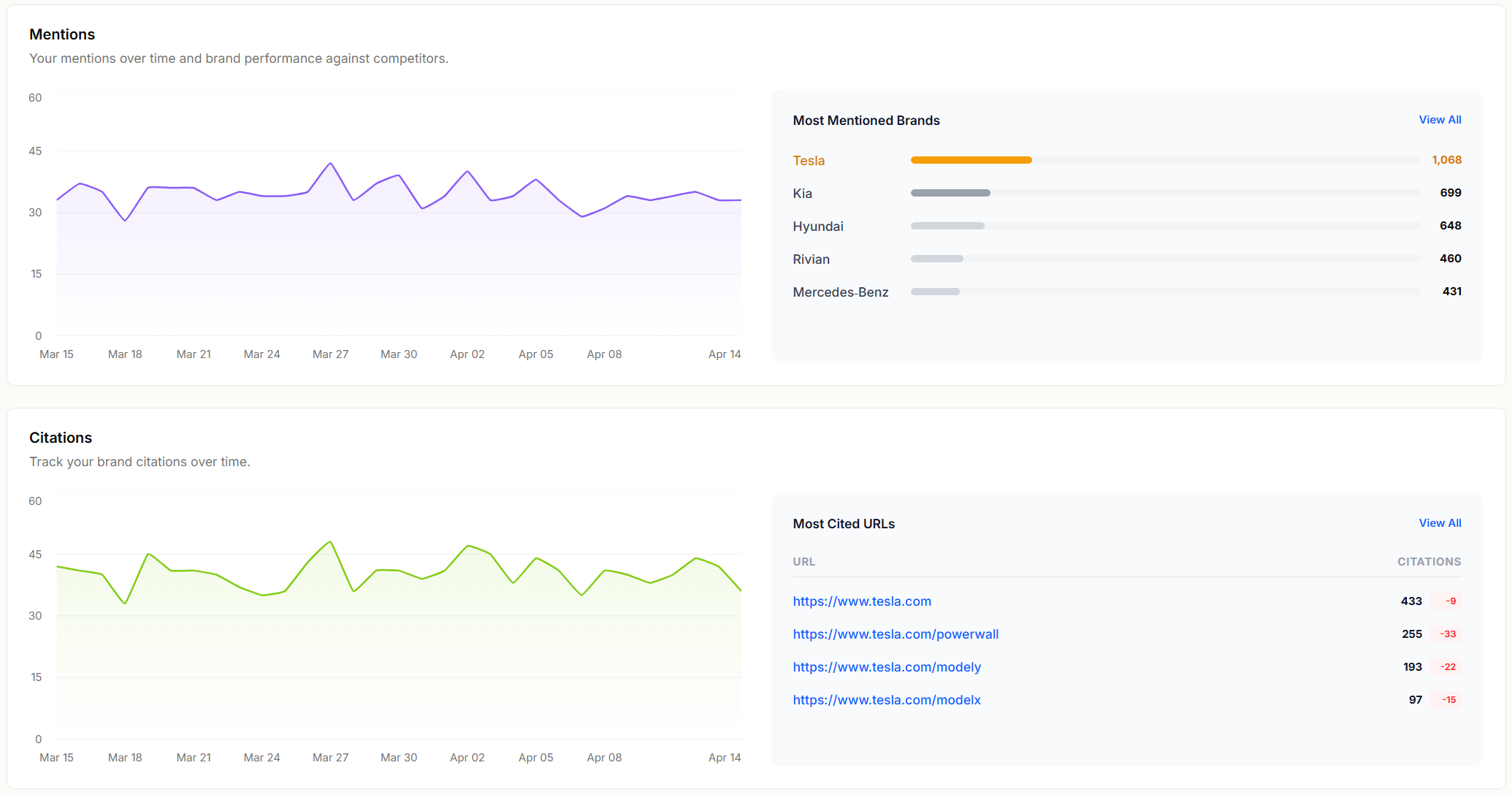

A mention is when the AI outputs your brand name. Mentions are driven by semantic association in training weights. They build awareness but generate zero referral traffic.

A citation is when the AI generates a clickable link to your domain. Citations are driven by Retrieval-Augmented Generation. The model searched its real-time index and trusted your URL as the authoritative source.


The gap between these two numbers reveals your biggest AEO (answer engine optimization) vulnerability. If you hold a 95% mention rate on a topic cluster but only a 10% citation rate, your brand marketing did its job. The LLM knows you belong in the answer, but the model doesn’t trust your domain enough to link to it. Instead, it cites a third-party aggregator, a publisher review, or a Reddit thread to back up the claim it made about you.

High mention rates with low citation rates mean you’re building demand and leaking the resulting traffic to someone else’s domain.
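The gap itself is simple arithmetic: mention rate minus citation rate over the same set of responses. The sketch below assumes a mention is a case-insensitive match on the brand name and a citation is any URL in the response whose host is your domain or one of its subdomains. The domain `acme.example`, the function names, and the sample responses are hypothetical.

```python
import re
from urllib.parse import urlparse

URL_PATTERN = re.compile(r"https?://[^\s)\]>\"']+")


def cites_domain(response, domain):
    """True if any URL in the response points at the domain or one of its subdomains."""
    for url in URL_PATTERN.findall(response):
        try:
            host = urlparse(url.rstrip(".,;:")).hostname or ""
        except ValueError:
            continue  # skip malformed URLs
        if host == domain or host.endswith("." + domain):
            return True
    return False


def leakage_gap(responses, brand, domain):
    """Return mention rate, citation rate, and the difference between them."""
    n = len(responses)
    if n == 0:
        return {"mention_rate": 0.0, "citation_rate": 0.0, "gap": 0.0}
    mention_rate = sum(brand.lower() in r.lower() for r in responses) / n
    citation_rate = sum(cites_domain(r, domain) for r in responses) / n
    return {
        "mention_rate": mention_rate,
        "citation_rate": citation_rate,
        "gap": mention_rate - citation_rate,
    }


# Illustrative responses only, not real model output.
responses = [
    "Acme is a strong pick. See https://www.acme.example/pricing for details.",
    "Acme is often recommended (source: https://reviews.example/acme-review).",
    "Acme and Globex both work; a thread at https://forum.example/t/123 compares them.",
    "Globex is the usual choice.",
]

report = leakage_gap(responses, "Acme", "acme.example")
print(report)
```

Matching on the parsed host rather than the raw URL string keeps a third-party page with your brand in its path from counting as a citation of your domain.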

Semantic Sentiment: The Metric That Explains the Tie

In comparison prompts where SOV is structurally guaranteed to hover near 50/50, the number itself tells you nothing. The variable that determines which tool the user picks is the language the AI uses to describe each option.

Semantic sentiment in AEO goes beyond positive, neutral, or negative. It maps the specific modifiers the model attaches to each brand: Which criteria does the AI say you win on? Which use cases does it assign to your competitor? When the AI recommends a competitor over you, what adjectives does it use: “expensive,” “complex,” “outdated,” or “limited”?

That modifier map gives your content team a specific brief. If the model calls your product “complex,” you need content that demonstrates simplicity. If it assigns “enterprise” to your competitor but “small team” to you, you need case studies and documentation that reframe your positioning at scale.
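A first-pass modifier map can be built by counting which descriptive terms show up in the same sentence as each brand. This is a sketch under loose assumptions: the watchlist of modifiers is hand-picked, sentences are split on end punctuation, and a sentence that names two brands credits its modifiers to both. The brands, watchlist, and sample responses are hypothetical.

```python
import re
from collections import Counter, defaultdict

# Hypothetical watchlist; in practice this would come from reading real responses.
MODIFIERS = [
    "expensive", "complex", "outdated", "limited",
    "enterprise", "small team", "simple", "affordable",
]


def modifier_map(responses, brands, modifiers=MODIFIERS):
    """Count watchlist modifiers that share a sentence with each brand."""
    counts = defaultdict(Counter)
    for response in responses:
        # Split after ., !, or ? followed by whitespace.
        for sentence in re.split(r"(?<=[.!?])\s+", response):
            lowered = sentence.lower()
            for brand in brands:
                if brand.lower() not in lowered:
                    continue
                for modifier in modifiers:
                    if modifier in lowered:
                        counts[brand][modifier] += 1
    return {brand: dict(counter) for brand, counter in counts.items()}


# Illustrative responses only, not real model output.
responses = [
    "Acme is powerful but complex to set up. Globex is simple and suits a small team.",
    "Acme targets enterprise buyers and can be expensive. Globex is affordable but limited.",
    "Acme is complex. Globex is simple.",
]

brand_modifiers = modifier_map(responses, ["Acme", "Globex"])
print(brand_modifiers)
```

The most frequent negative modifier for your brand is the natural starting point for the next content brief.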

Reverse-engineering the model’s semantic framing converts a vanity metric into a content strategy.

Putting This Into Practice

Three shifts will clean up your AI visibility reporting overnight:

- Step 1: Sort prompts by intent tier before calculating any aggregate metric. Discovery-intent SOV and SOV on brand-named comparison prompts measure different things. Blending them produces a number that’s useless for planning.

- Step 2: Track your Mention-to-Citation Leakage Gap for each topic cluster. If the gap is wide, your technical AEO needs work regardless of how strong your mention rate looks.

- Step 3: Extract the semantic modifiers from comparison responses. Build content briefs that target the specific language the model uses to describe your weaknesses.

We built Operyn to operationalize this AI Prompt Tracking framework. The platform isolates organic mindshare from prompted mentions, tracks citation rates down to the URL, and extracts the semantic modifiers shaping how AI models describe your brand. If you’re moving from keyword tracking to AI visibility, the following Operyn product guide series will walk through each of these capabilities in detail.