Inside the Search Chain: The AI Queries That Determine Your Brand's Visibility

Feature Update: Operyn Fan-out Visibility

Leela Adwani

AI Visibility Researcher and Editor

Product Mechanics

Most AI visibility tools show you a number. Your brand appeared in 82% of responses this week. Sentiment was positive. The more sophisticated ones layer in competitor tracking: your competitor is closing the gap on two topics.

You can track that number over time and monitor mention rates, sentiment, and share of voice across platforms. What the numbers don't give you is a diagnosis or a clear next step. They tell you that something shifted, but connecting the movement to a cause still takes guesswork, experience, or a lot of manual digging.

Fan-out visibility is Operyn's answer to that. It shows you the search queries the AI ran before it responded to your prompt, the part of the process that most visibility tools either can't capture or gate behind enterprise-tier pricing.

What happens before the AI answers

When a user asks an AI a question, the model often doesn't answer from memory alone. It searches, and rarely just once. It fans out into several queries, each targeting a different dimension of the question: one for recency, one for comparison, one for a specific feature or use case, and so on. The results from each query shape a different part of the final answer.

Most AI visibility tools measure only the output: your brand was mentioned, or it wasn't. Operyn captures what happened before that: the full chain of queries the model ran, the results it pulled, and which searches your content appeared in.

When your brand drops out of a recommendation, the answer is somewhere in that chain. Did the AI search and find a competitor's article that outranked yours? Did it not search at all, meaning it answered from training data in which your brand isn't strongly represented? Did it search for something you didn't expect, and your content simply wasn't there for that query? Without the chain, these very different scenarios look identical from the outside.
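As a rough illustration of how those scenarios separate once the chain is visible, here is a minimal Python sketch. The `SearchStep` shape, the label names, and the example domains are assumptions made for this example; they are not Operyn's data model or export format.

```python
from dataclasses import dataclass, field


@dataclass
class SearchStep:
    """One search the model ran (hypothetical shape, not Operyn's schema)."""
    query: str
    # Domains returned for the query, in ranked order, best first.
    result_domains: list[str] = field(default_factory=list)


def diagnose_step(step: SearchStep, brand: str, competitors: set[str]) -> str:
    """Label one search from the brand's point of view."""
    if brand not in step.result_domains:
        # The model searched, but the brand had nothing in the results.
        return "absent"
    brand_rank = step.result_domains.index(brand)
    if any(d in competitors for d in step.result_domains[:brand_rank]):
        # A competitor's page ranked above the brand's page.
        return "outranked"
    return "ahead_of_competitors"


def diagnose_chain(
    chain: list[SearchStep], brand: str, competitors: set[str]
) -> dict[str, str]:
    """Label every query in the chain.

    An empty result means the model ran no searches at all, which is the
    training-data scenario rather than a retrieval one.
    """
    return {step.query: diagnose_step(step, brand, competitors) for step in chain}


# Illustrative chain with placeholder domains.
chain = [
    SearchStep("best EV charging network comparison",
               ["rival.example", "brand.example"]),
    SearchStep("EV charging networks renewable energy",
               ["rival.example"]),
]
report = diagnose_chain(chain, "brand.example", {"rival.example"})
```

With this input, the first query is labeled `outranked` and the second `absent`: two different problems that a single mention rate would report as the same drop.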

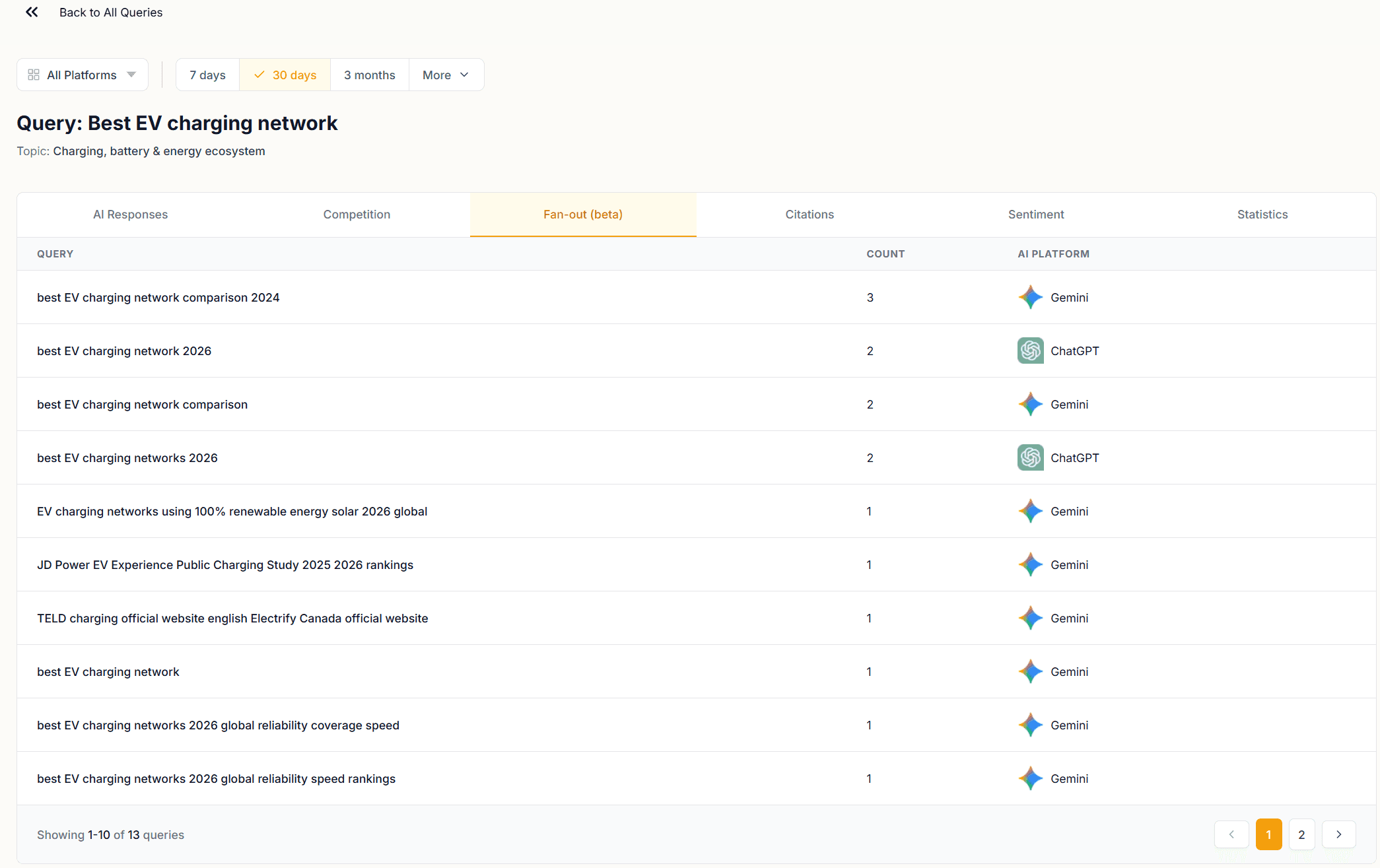

The Fan-out tab in Operyn. Each row is a search query the AI model ran internally before composing its answer.

Take the query "Best EV charging network." Four words. Before responding, Gemini and ChatGPT generated 13 separate search queries, including:

"best EV charging network comparison 2024"

"best EV charging networks 2026 global reliability coverage speed"

"EV charging networks using 100% renewable energy solar 2026 global"

"JD Power EV Experience Public Charging Study 2025 2026 rankings"

"TELD charging official website english Electrify Canada official website"

Those 13 queries tell you what the AI treats as the relevant dimensions for this category: comparison data, reliability and speed rankings, sustainability credentials, third-party studies, regional competitors. If your brand has no content targeting reliability comparisons, or no presence in JD Power coverage, you're unlikely to appear in those parts of the answer, however well known you are. A mention rate won't surface that, but the fan-out does.
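One way to turn that reading into a checklist is to tag each fan-out query with the dimension it targets and compare the result against the dimensions your content already covers. The sketch below does this for the five queries quoted above. The keyword rules and the `covered` set are assumptions chosen for illustration, not something Operyn provides.

```python
# Hypothetical keyword rules; the dimension names follow the paragraph above.
DIMENSION_KEYWORDS = {
    "comparison": ["comparison"],
    "reliability_speed": ["reliability", "speed"],
    "sustainability": ["renewable", "solar"],
    "third_party_studies": ["jd power", "study"],
    "regional_competitors": ["teld", "electrify canada"],
}

# The five fan-out queries quoted in the article.
FAN_OUT_QUERIES = [
    "best EV charging network comparison 2024",
    "best EV charging networks 2026 global reliability coverage speed",
    "EV charging networks using 100% renewable energy solar 2026 global",
    "JD Power EV Experience Public Charging Study 2025 2026 rankings",
    "TELD charging official website english Electrify Canada official website",
]


def dimensions_for(query: str) -> set[str]:
    """Return every dimension whose keywords appear in the query."""
    q = query.lower()
    return {
        dim
        for dim, keywords in DIMENSION_KEYWORDS.items()
        if any(keyword in q for keyword in keywords)
    }


def coverage_gaps(queries: list[str], covered: set[str]) -> dict[str, list[str]]:
    """Group queries by the dimensions the brand has no content for."""
    gaps: dict[str, list[str]] = {}
    for query in queries:
        for dim in dimensions_for(query) - covered:
            gaps.setdefault(dim, []).append(query)
    return gaps


# Assumption: the brand already has comparison and sustainability content.
gaps = coverage_gaps(FAN_OUT_QUERIES, covered={"comparison", "sustainability"})
for dim, queries in sorted(gaps.items()):
    print(dim, "->", queries)
```

Simple substring matching is enough for a first pass. A real workflow would likely need a richer classifier, since the model's queries shift over time.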

From scoreboard to strategy

Here's what that looks like when something actually goes wrong.

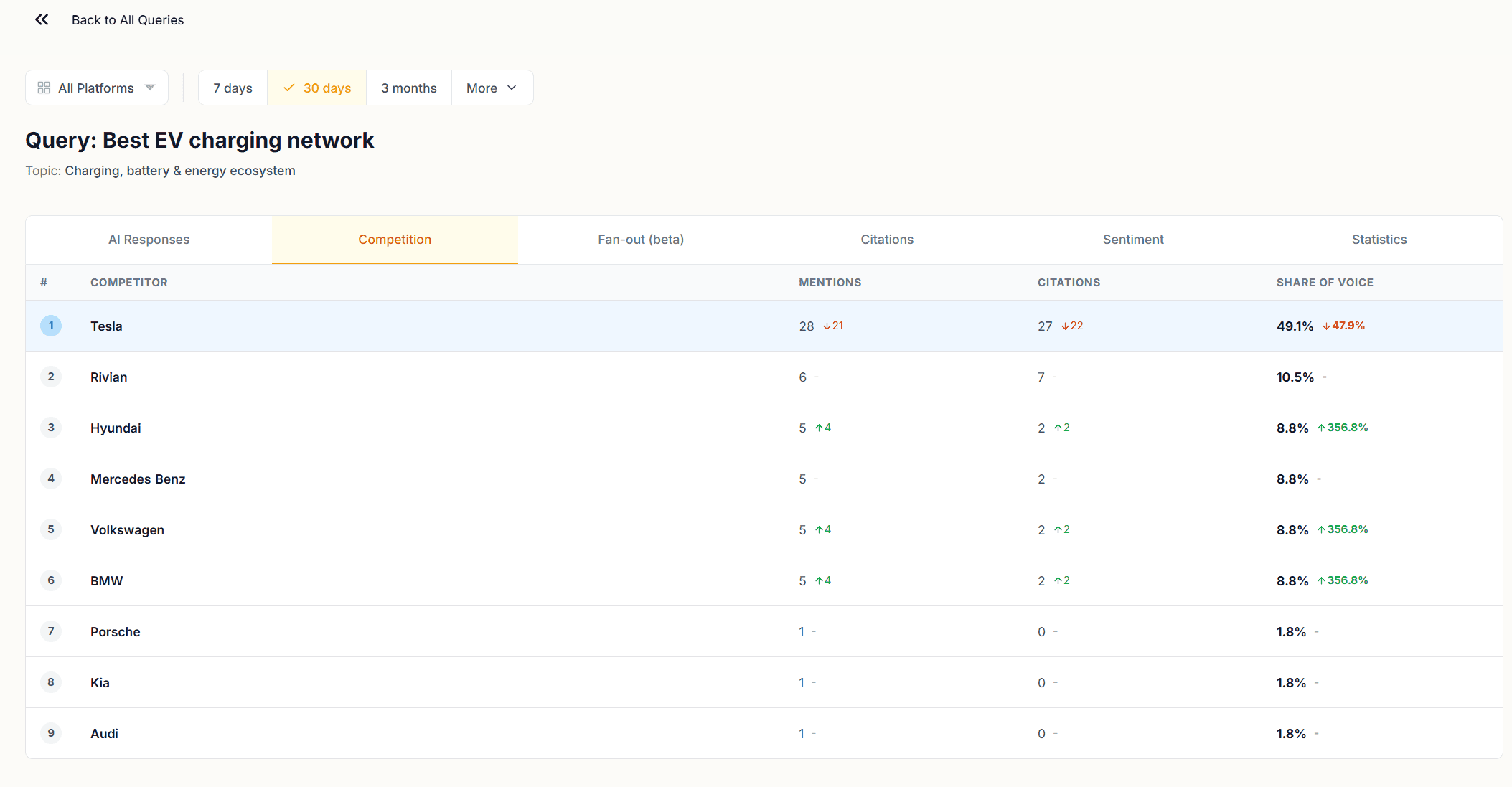

Tesla's visibility on this query dropped roughly 50% across Gemini 2.5 and GPT-5 mini over the tracked period. On a standard dashboard, that's the whole story: a big red number, no explanation. With the fan-out, you can start tracing why.

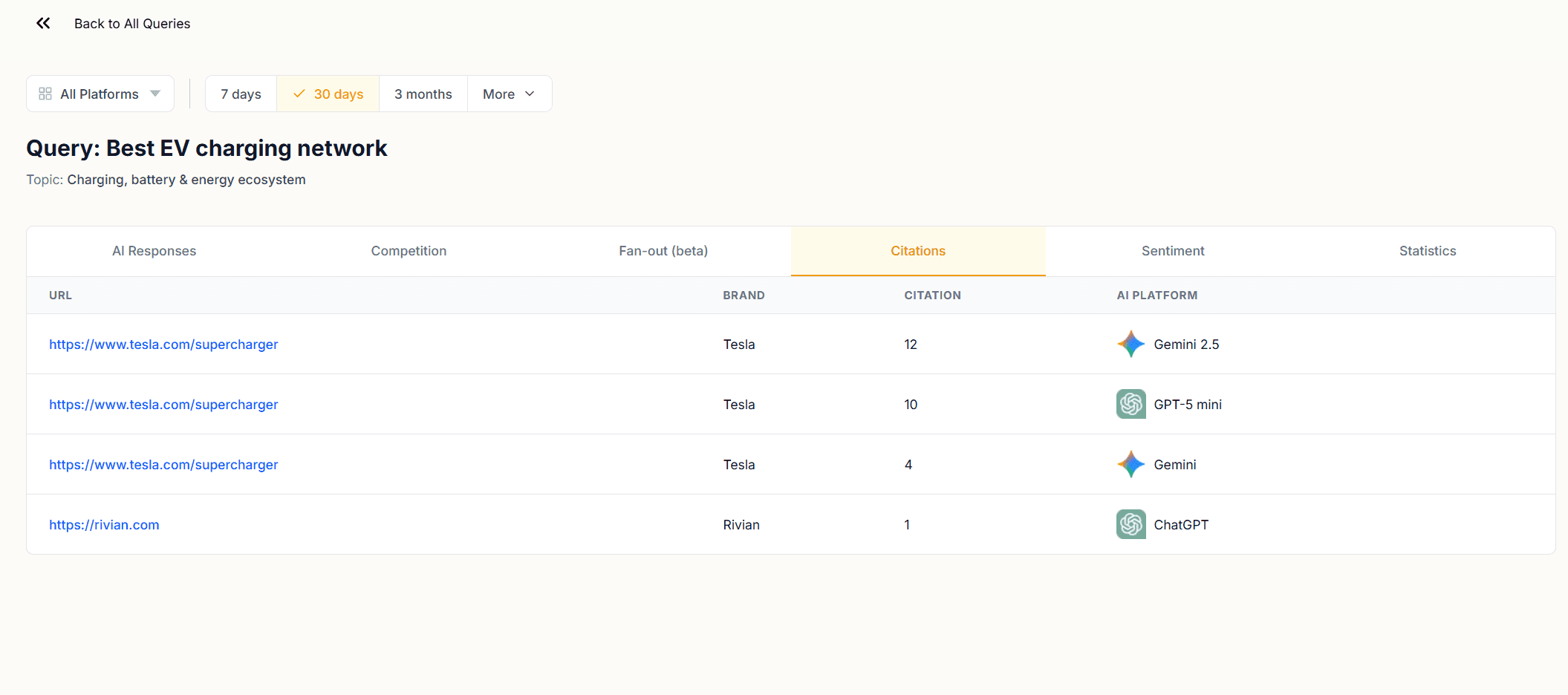

The fan-out shows the AI running sustainability queries, JD Power ranking searches, and comparison searches across regional competitors. Over the same period, Hyundai, Volkswagen and BMW each jumped 356% in share of voice on this query. Tesla's citations, meanwhile, are almost entirely from tesla.com/supercharger, its own domain. No third-party review coverage, no comparison articles, no presence in the external sources the fan-out queries are pulling from.

So the drop isn't mysterious. The AI is increasingly weighting third-party comparison and sustainability content to answer this question, and those searches are returning results that feature competitors with fresher external coverage. Tesla's own pages are still being cited, but the query mix has shifted in a direction where Tesla's content isn't present.

That's a diagnosable problem with a clear direction for a fix, not just a number that went down.
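The "almost entirely its own domain" observation can be expressed as a single ratio: the share of citations that resolve to the brand's own site. Here is a minimal sketch, assuming you have the cited URLs as a plain list. The four URLs are made-up placeholders rather than tracked data, and `first_party_share` is a hypothetical helper.

```python
from urllib.parse import urlparse


def first_party_share(citation_urls: list[str], own_domain: str) -> float:
    """Fraction of citations hosted on the brand's own domain or a subdomain."""
    if not citation_urls:
        return 0.0

    def is_own(url: str) -> bool:
        host = (urlparse(url).hostname or "").lower()
        return host == own_domain or host.endswith("." + own_domain)

    return sum(is_own(url) for url in citation_urls) / len(citation_urls)


# Placeholder citations for illustration only.
citations = [
    "https://www.tesla.com/supercharger",
    "https://www.tesla.com/supercharger",
    "https://www.tesla.com/supercharger",
    "https://reviews.example/ev-charging-comparison",
]
share = first_party_share(citations, "tesla.com")
print(f"first-party share: {share:.0%}")
```

A share close to 100% on a query whose fan-out leans on third-party comparisons and studies suggests the gap is in external coverage, not in the brand's own pages.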

The Fan-out tab surfaces the intermediate search queries behind every AI response we track. Used alongside the Citations tab, it tells you whether a visibility gap is a training data problem or a content freshness problem, two different diagnoses that point to different fixes.

Most visibility tools stop at the score. Operyn's fan-out visibility shows you what drove it.

The Fan-out tab is available now in beta across all monitored queries. Open any query in AI Response Insights and select the Fan-out tab to see the search chain behind your results.
