The llms.txt Mirage: Why AI Bots Are Ignoring Your Site Directives

llms.txt remains an unproven AEO lever because tested AI crawlers ignored the file entirely, making distributed authority, crawlable linked pages, structured content, and consistent third-party signals more important for AI visibility.

KumarM

Writer - Researcher


AEO Principles

As Answer Engine Optimization (AEO) gains traction, the llms.txt file has emerged as a proposed communication layer between websites and AI. The concept is modeled after the decades-old robots.txt standard: a simple text file that tells Large Language Models how to access, parse, and prioritize your content.
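For readers who have not seen one, the sketch below renders a small llms.txt body in the layout described by the public llms.txt proposal: an H1 title, a blockquote summary, and H2 sections containing markdown link lists. The function name `build_llms_txt`, the company name, and every URL are hypothetical placeholders for illustration, not part of the experiment.

```python
def build_llms_txt(title, summary, sections):
    """Render an llms.txt body: H1 title, blockquote summary, H2 link lists.

    `sections` maps a heading to a list of (name, url, note) tuples.
    """
    lines = [f"# {title}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for name, url, note in links:
            lines.append(f"- [{name}]({url}): {note}")
        lines.append("")
    # Trim trailing blank lines and end the file with a single newline.
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical site data, used only to show the shape of the file.
example = build_llms_txt(
    "Example Co",
    "Example Co makes scheduling software for small teams.",
    {
        "Docs": [
            ("Pricing", "https://example.com/pricing.md", "Plans and limits"),
            ("API", "https://example.com/api.md", "REST endpoints"),
        ]
    },
)
print(example)
```

The file is meant to sit at the site root, next to robots.txt, which is why the comparison between the two comes up so often.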

The industry expectation is that providing a markdown-optimized version of your site via this file will help AI systems ingest your data more efficiently, leading to better brand representation in generated answers. However, a controlled experiment by Reboot suggests this entire framework is currently "optimization theater."

The Experiment: A Test of Discovery and Priority

The researchers conducted a rigorous study to determine if AI bots actually interact with llms.txt files. To isolate this variable, they removed common noise factors like domain authority and existing link equity.

Methodology

Isolation: The team created new landing pages on established domains. These pages were orphans, meaning they had zero internal or external links pointing to them.

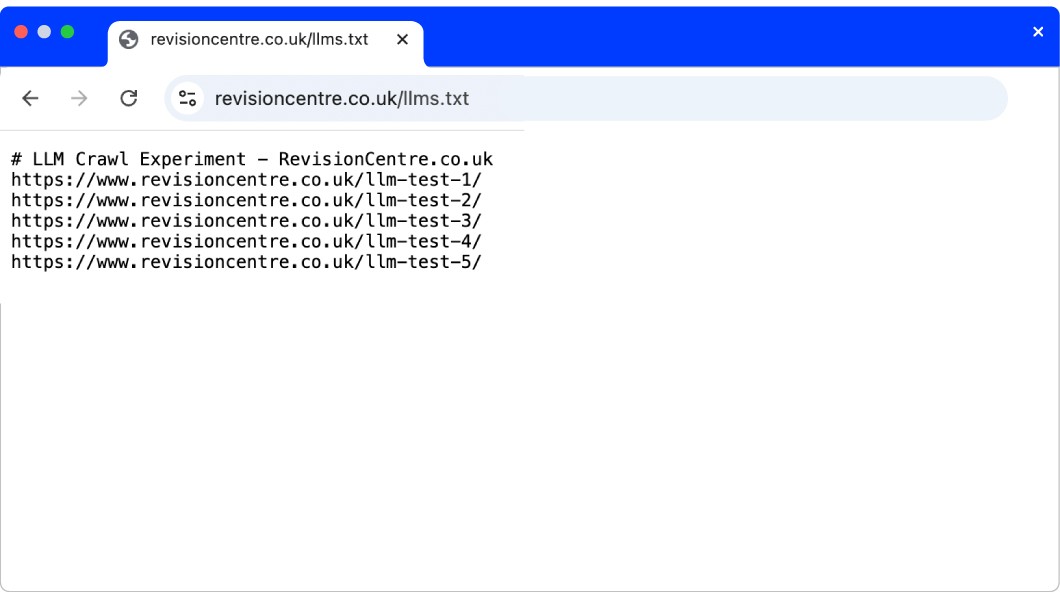

The Single Path: The only place these URLs existed was inside a newly published

llms.txtfile at the root directory.The Monitoring: They tracked server logs for three months on domains where GPTBot, ClaudeBot, and PerplexityBot were already actively crawling other content on the domains.

One LLMs.txt file created for the experiment.
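The monitoring step can be approximated on your own server. The sketch below is a minimal example, assuming access logs in the common Combined Log Format and that each crawler identifies itself with the substrings GPTBot, ClaudeBot, or PerplexityBot in its user-agent. The function name, watched paths, and sample log lines are hypothetical and are not taken from the experiment's data.

```python
import re
from collections import Counter

# User-agent substrings for the three crawlers named in the experiment.
AI_BOTS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Combined Log Format: ... "METHOD path proto" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_bot_hits(lines, watched_paths):
    """Return (total hits per bot, hits per bot on the watched paths)."""
    total = Counter()
    watched = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue  # Skip lines that are not in the expected format.
        bot = next((b for b in AI_BOTS if b in match["agent"]), None)
        if bot is None:
            continue  # Not one of the crawlers we are tracking.
        total[bot] += 1
        path = match["path"].split("?", 1)[0]  # Ignore query strings.
        if path in watched_paths:
            watched[bot] += 1
    return total, watched


# Made-up log lines for illustration only.
sample_log = [
    '203.0.113.5 - - [01/Jan/2025:10:00:00 +0000] "GET /blog/post HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.9 - - [01/Jan/2025:10:05:00 +0000] "GET /pricing HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '198.51.100.7 - - [01/Jan/2025:10:06:00 +0000] "GET /llms.txt HTTP/1.1" 200 300 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
    '203.0.113.5 - - [01/Jan/2025:10:09:00 +0000] "GET /about HTTP/1.1" 200 1024 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
]

total, watched = summarize_bot_hits(sample_log, {"/llms.txt", "/orphan-page"})
print("All bot hits:", dict(total))
print("Hits on watched paths:", dict(watched))
```

A bot that shows up in the first counter but never in the second is crawling the site while leaving llms.txt and the orphan URLs untouched, which is the pattern the experiment reports.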

The Findings

The results were absolute. Despite consistent activity from major AI crawlers across the rest of the site, not a single bot requested the llms.txt file during the ninety-day window. Consequently, every orphan page linked within that file remained completely undiscovered.

This indicates that while the llms.txt proposal is popular in developer circles, it was not part of the production-level discovery loops of OpenAI, Anthropic, or Perplexity on the tested domains during the test window.

Why Site-Level Controls Fail

The Reboot experiment reinforces a core principle of AEO: your visibility is tied to your footprint across the web, not just your own domain. The failure of llms.txt to trigger a crawl suggests that AI bots do not rely on root-level directive files to find your content.

The Broken Causal Chain

The SEO industry often assumes a linear path: Access > Indexing > Citation. In reality, AI crawlers are not "exploratory" in the way we hope. They don't check your root directory for instructions on where to go next.

Instead, they follow the existing "scents" of authority and connectivity already established across the web. They aggregate data from third-party sources, cached indexes, and digital PR mentions. If your brand is discussed on a high-authority forum or summarized in a trade publication, an LLM can reconstruct your information without ever touching your server or reading your llms.txt file.

The Illusion of the Gatekeeper

The server logs showed that GPTBot and others were actively crawling the rest of the site's known pages while completely ignoring the llms.txt file. The llms.txt proposal defines no blocking directive (that role belongs to robots.txt), but had the experiment tried to use the file to steer bots away from an existing, well-known page, the result likely would have been the same: the bot would have ignored the file and crawled the page anyway because it already knew the URL existed from external sources. If a page is mentioned elsewhere on the web, an AI crawler can find it regardless of what your llms.txt says.


Strategic Implications for Brands

If you are spending engineering resources on llms.txt today, you are likely optimizing for a ghost. True AI visibility requires moving beyond root-level text files and focusing on the layers that actually impact model output.

Shift Focus to Semantic Redundancy

Single-source authority is fragile in the AI age. To increase the probability of being cited accurately, brands must move toward semantic redundancy. This means ensuring your core brand claims and positioning are repeated consistently across multiple third-party sites that the bots are actually crawling.
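One rough way to audit semantic redundancy is to count how many third-party sources repeat each core claim. The sketch below is a deliberately crude illustration that uses case-insensitive substring matching; the function name `claim_coverage`, the brand "Acme", the claims, and the source texts are all hypothetical, and a real audit would need fuzzier matching than this.

```python
def claim_coverage(claims, sources):
    """For each claim, return the fraction of sources whose text mentions it.

    `sources` maps a source label to the text collected from that source.
    Matching is a plain case-insensitive substring test.
    """
    coverage = {}
    for claim in claims:
        needle = claim.lower()
        hits = sum(1 for text in sources.values() if needle in text.lower())
        coverage[claim] = hits / len(sources) if sources else 0.0
    return coverage


# Made-up third-party snippets about a fictional brand.
sources = {
    "forum": "Acme is an open-source scheduler founded in 2015.",
    "trade_press": "Acme, founded in 2015, ships an open-source core.",
    "review_site": "Acme is an Open-Source tool.",
    "directory": "Acme: job scheduling software.",
}
coverage = claim_coverage(["open-source", "founded in 2015"], sources)
for claim, share in coverage.items():
    print(f"{claim}: mentioned in {share:.0%} of sources")
```

Claims with low coverage are the ones most exposed to the single-source fragility described above.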

The Four Layers of Visibility

As defined in the Operyn AEO Technical Checklist, crawler access is only one piece of the puzzle. To be cited by an LLM, your content must pass through four gates:

Discoverability: Can the bot find the page through traditional links?

Accessibility: Is the content crawlable?

Readability: Is the data structured in a way the model can parse?

Comprehensibility: Is the information clear enough for the model to interpret correctly?
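The first two gates lend themselves to a simple automated check. The sketch below is a minimal example, assuming you already have a map of internal links (page to the pages it links to) and the text of your robots.txt. It uses Python's standard `urllib.robotparser` for the crawlability test. The helper names, paths, and robots.txt rules are hypothetical, and the readability and comprehensibility gates are not covered here.

```python
from urllib.robotparser import RobotFileParser


def find_orphans(pages, link_graph):
    """Discoverability: return pages that no other page links to."""
    linked = {
        target
        for source, targets in link_graph.items()
        for target in targets
        if target != source  # A self-link does not make a page discoverable.
    }
    return sorted(set(pages) - linked)


def crawlable_by(robots_txt, user_agent, urls):
    """Accessibility: map each URL to whether robots.txt allows the agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {url: parser.can_fetch(user_agent, url) for url in urls}


# Hypothetical site structure: "/orphan" has no inbound links.
pages = ["/", "/pricing", "/orphan"]
link_graph = {"/": ["/pricing"], "/pricing": ["/"]}

# Hypothetical robots.txt that keeps GPTBot out of /private/.
robots_txt = """\
User-agent: GPTBot
Disallow: /private/

User-agent: *
Allow: /
"""

orphans = find_orphans(pages, link_graph)
access = crawlable_by(
    robots_txt,
    "GPTBot",
    ["https://example.com/pricing", "https://example.com/private/report"],
)
print("Orphan pages:", orphans)
print("GPTBot access:", access)
```

A page that appears in the orphan list fails the first gate no matter what the later gates say, which mirrors what happened to the test pages in the experiment.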

Conclusion: From Theory to Observation

The experiment reframes llms.txt as an unproven theory. AI systems do not currently prioritize these files, and relying on them as a primary lever for growth is a strategic error. AEO is not about controlling whether an AI accesses your site. It is about shaping the distributed signals the AI uses to construct its answers.

Operyn provides the diagnostic analytics to see past the technical hype. We measure how your brand is actually cited or omitted across the LLM ecosystem, giving you the data to influence your representation where technical files fail.
