Welcome to Part 2 of the Operyn Product Guide Series. (If you are just joining us, start with Part 1: Calibrating Your AI Tracking Environment, or read the Intent-First Framework for the strategic context behind this methodology.)

In Part 1, you segmented your tracked queries into Discovery, Consideration, and Conversion tiers. Before you open your Operyn dashboard, you need to understand what that segmentation changes about how you read the numbers.

Most AI visibility tools report Share of Voice (SOV) as a single, blended percentage. They count every brand mention across every tracked prompt, average the results, and hand you one headline metric.

That number includes real signal. But it also includes structural noise, and the two are inseparable without intent segmentation. A blended SOV treats a hard-earned organic mention the same as a mention the AI had no choice but to generate. Unless you can tell them apart, you can’t act on either one.

Intent-First Framework: How to Read SOV Data by Query Type

The Assure-Mention Effect

When a user types a query containing a brand name, the AI is structurally compelled to mention that brand. The model needs to reference it to fulfill the conversational intent. If someone asks “Is Brand A good for enterprise teams?”, Brand A will appear in the response because the prompt demanded it. This is the Assure-Mention Effect. It applies to any prompt that names a specific brand, whether that’s a comparison query, a feature question, or a pricing lookup.

The effect doesn’t make branded prompts useless. You should track them, but you need to read the resulting SOV number differently depending on what the query’s intent tells you about why the mention occurred.

How Intent Changes What SOV Means

The three tiers you configured in Part 1 each produce SOV data that answers a different question.

Discovery queries

Example: “How do I choose the right cycling apparel for long-distance rides?”

No brand names appear in this prompt. If an LLM mentions your brand here, it did so on its own. The model associated you with the problem space based on its training data and retrieval index.

This is the most valuable SOV signal in your dashboard. A mention at the discovery tier represents organic AI mindshare: the model considers you relevant to this topic without being told to.

If your SOV reads 0% across your discovery queries, that’s diagnostic information, not a performance failure. It tells you the brand lacks presence at the awareness layer of the model’s knowledge base. Your content strategy needs to address that gap before you can expect unprompted mentions.

Consideration queries

Example: “Brand A vs Brand B for high-end cycling clothing.”

Both brands appear in the prompt. The Assure-Mention Effect kicks in: the AI will reference both brands to answer the question. An SOV split near 50/50 is the structural default, not a competitive outcome.

The SOV number at this tier tells you very little on its own. The actionable data lives in the framing. When the AI compares the two brands, what criteria does it assign to each? Does it position you as the premium option or the budget alternative? Does it recommend the competitor for your most valuable use case?

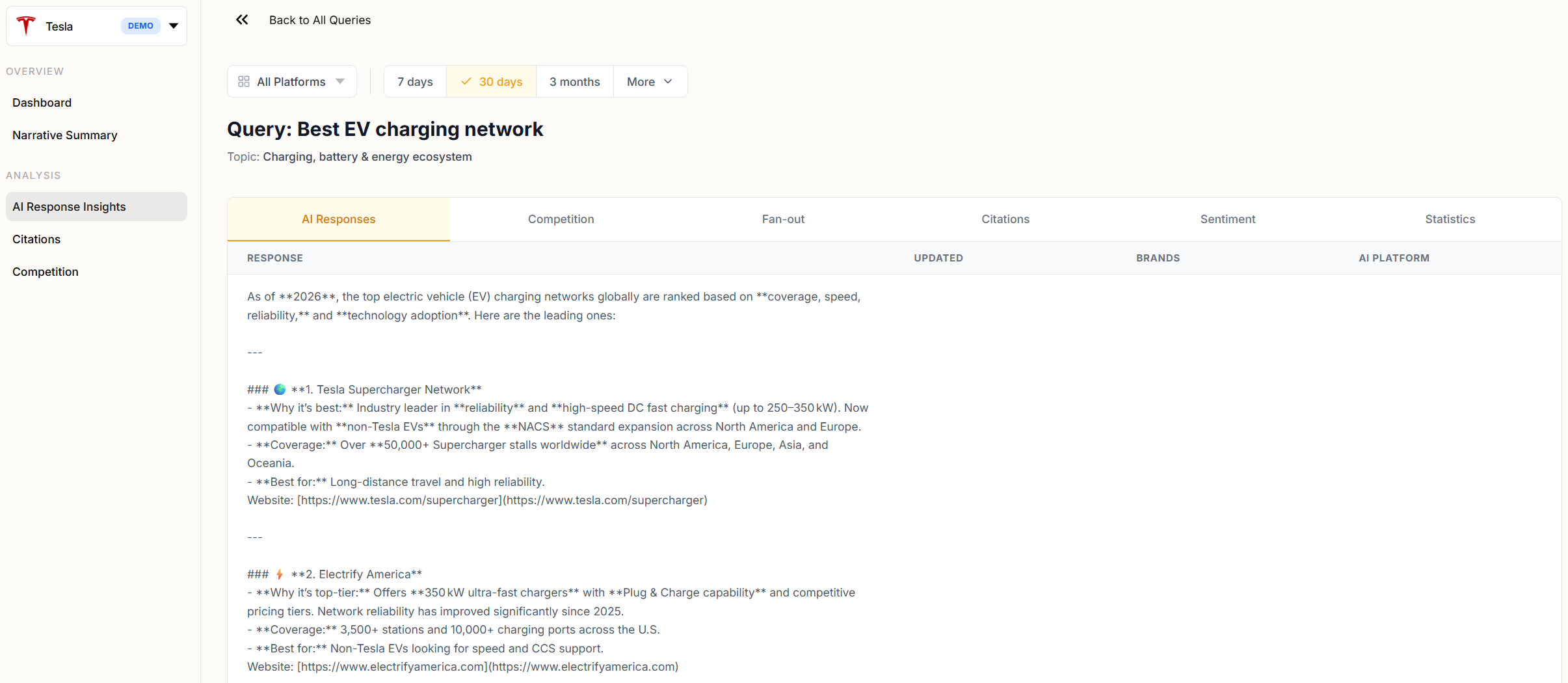

Operyn’s AI Response Insights module surfaces this framing data. When you drill into a query, the response text shows how the AI positions each brand, the Competition tab breaks down SOV against rivals, and the Sentiment tab extracts the specific keywords the model associates with you. This guide focuses on reading the baseline metrics; later guides cover how to use that data to build content briefs that shift the model’s framing.

Conversion queries

Example: “What are the hidden fees in Brand A’s contract?”

Your brand is the subject of the query. SOV will read at or near 100% because the entire response is about you. There is no competitive signal in this number.

The metric that matters at this tier is factual accuracy. Is the AI returning your current pricing? Correct feature descriptions? Valid integration partners? An inaccurate response here costs you a conversion. You track conversion queries to catch hallucinated facts before your buyers encounter them.
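One narrow slice of that audit, pricing, can be sketched in a few lines. This is a minimal illustration, not an Operyn feature: the `stale_prices` helper, the sample response text, and the price values are all hypothetical, and it assumes you have pasted the AI's response text into a string and that your prices are quoted in dollars.

```python
import re

# Matches dollar amounts such as $49, $49.99, or $1,200 (assumed price format).
PRICE_PATTERN = re.compile(r"\$\d+(?:,\d{3})*(?:\.\d{2})?")

def stale_prices(response_text, current_prices):
    """Return dollar amounts in the response that are not in your current price list."""
    found = PRICE_PATTERN.findall(response_text)
    return [price for price in found if price not in current_prices]

# Hypothetical AI response and hypothetical current prices.
response = "Brand A starts at $39 per seat; the team plan is $99."
current = {"$49", "$99"}

print(stale_prices(response, current))  # amounts to review by hand
```

A flagged amount is only a prompt for manual review: a response can quote a competitor's price or a discount, so the check narrows where you look rather than deciding what is wrong.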

Why Blending Obscures the Signal

A blended SOV score averages all three tiers into one number. That average combines your earned discovery mentions (hard to get, high signal) with your structurally guaranteed consideration and conversion mentions (easy to get, low signal).

The result is a percentage that overstates your organic presence. Your discovery-tier SOV might be 5%, but if you track enough branded queries, the blended number could read 40%. That 40% can feel like progress, but it isn’t. The 5% is the number your content team needs to move.
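To see how the arithmetic produces that gap, here is a small worked sketch. The query counts and the per-tier SOV values are illustrative assumptions (a 5% discovery SOV, the 50/50 consideration default, and a 100% conversion SOV), and the blend is assumed to be a simple average weighted by query count.

```python
# Hypothetical query mix: counts and per-tier SOV values are illustrative only.
tiers = {
    "discovery": {"queries": 50, "sov": 0.05},      # earned mentions
    "consideration": {"queries": 25, "sov": 0.50},  # structural 50/50 default
    "conversion": {"queries": 25, "sov": 1.00},     # structurally guaranteed
}

def blended_sov(tiers):
    """Average SOV across all tracked queries, weighted by query count."""
    total_queries = sum(t["queries"] for t in tiers.values())
    weighted_sum = sum(t["queries"] * t["sov"] for t in tiers.values())
    return weighted_sum / total_queries

print(f"Blended SOV: {blended_sov(tiers):.0%}")
print(f"Discovery SOV: {tiers['discovery']['sov']:.0%}")
```

With this mix, half the tracked queries are branded, and they lift the blended figure to 40% while the discovery figure stays at 5%.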

This doesn’t mean you should stop tracking branded queries. Consideration queries reveal how the AI frames you against competitors. Conversion queries catch factual errors that lose deals. Both tiers generate the data you need.

The fix is to never read them in aggregate. Evaluate each tier on its own terms:

- Discovery SOV measures organic mindshare. This is the number you benchmark quarter over quarter.

- Consideration SOV is structurally constrained. Ignore the percentage; focus on the framing and sentiment data in the Perception module.

- Conversion SOV is structurally guaranteed. Ignore the percentage; audit the AI’s factual accuracy against your current product specs.

The Intent-First Framework: Application in Operyn

Your AI Response Insights view (under Analysis in the sidebar) shows SOV broken down by query, grouped by topic. The view doesn’t separate queries by intent tier automatically, so apply a simple manual check as you scan the list: does the query text contain your brand name? If it does, you’re looking at a consideration or conversion query where the Assure-Mention Effect inflates the SOV number. If it doesn’t, you’re looking at a discovery query where the SOV reflects organic mindshare. That distinction changes whether a 50% SOV is a signal worth acting on or a structural artifact you can set aside.
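If you keep your tracked queries in a list outside the dashboard, the same brand-name check can be sketched in code. The function names and labels here are hypothetical, and the sketch assumes a plain case-insensitive match on your brand name is enough; it will miss misspellings and nicknames.

```python
import re

def mentions_brand(query, brand_names):
    """True if any brand name appears in the query as a standalone term (case-insensitive)."""
    for name in brand_names:
        # Lookarounds keep "Brand A" from matching inside a longer word.
        pattern = rf"(?<!\w){re.escape(name)}(?!\w)"
        if re.search(pattern, query, flags=re.IGNORECASE):
            return True
    return False

def how_to_read_sov(query, brand_names):
    """Label a query by whether its SOV is structurally inflated or organic."""
    if mentions_brand(query, brand_names):
        return "structural (consideration or conversion)"
    return "organic (discovery)"

my_brand = ["Brand A"]  # hypothetical brand name, taken from the examples above
for q in [
    "How do I choose the right cycling apparel for long-distance rides?",
    "Brand A vs Brand B for high-end cycling clothing.",
    "What are the hidden fees in Brand A’s contract?",
]:
    print(how_to_read_sov(q, my_brand), "|", q)
```

The check deliberately stops at two labels. Telling consideration from conversion still depends on the tiers you assigned in Part 1.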

The AI Response Insights view also groups queries by topic cluster. If a topic cluster performs well on consideration-tier queries but shows no discovery-tier presence, that’s a content gap: the AI knows you exist in that space when prompted, but doesn’t associate you with it organically.
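That comparison can be sketched as a small script, assuming you have noted per-query SOV alongside its topic and tier. The row fields, the sample topics, and the 0.4 cutoff for "performs well" are hypothetical choices for illustration, not an Operyn export format or a recommended threshold.

```python
from collections import defaultdict

def find_content_gaps(rows, threshold=0.4):
    """Topics with solid consideration-tier SOV but zero discovery-tier SOV."""
    by_topic = defaultdict(lambda: {"discovery": [], "consideration": []})
    for row in rows:
        if row["tier"] in ("discovery", "consideration"):
            by_topic[row["topic"]][row["tier"]].append(row["sov"])

    gaps = []
    for topic, tiers in by_topic.items():
        discovery, consideration = tiers["discovery"], tiers["consideration"]
        if not discovery or not consideration:
            continue  # one tier is untracked, so the topic cannot be judged
        avg_consideration = sum(consideration) / len(consideration)
        if avg_consideration >= threshold and max(discovery) == 0:
            gaps.append(topic)
    return gaps

# Hypothetical rows; topics and values are illustrative only.
rows = [
    {"topic": "long-distance apparel", "tier": "discovery", "sov": 0.0},
    {"topic": "long-distance apparel", "tier": "discovery", "sov": 0.0},
    {"topic": "long-distance apparel", "tier": "consideration", "sov": 0.5},
    {"topic": "commuter gear", "tier": "discovery", "sov": 0.2},
    {"topic": "commuter gear", "tier": "consideration", "sov": 0.5},
    {"topic": "rain jackets", "tier": "consideration", "sov": 0.5},
]

print(find_content_gaps(rows))
```

Topics with no discovery queries at all are skipped instead of flagged, because an untracked tier is a measurement gap, not evidence of missing mindshare.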

That gap is where your content investment should go. Later modules in this series cover how to use the data to build the specific briefs that close it.

Next in this series: Part 3: The Anatomy of AI Visibility breaks down the difference between Mentions and Citations, introduces the Leakage Gap metric, and walks through how to read the Brand Landscape chart on your dashboard.